8 Bit Vs 10 Bit

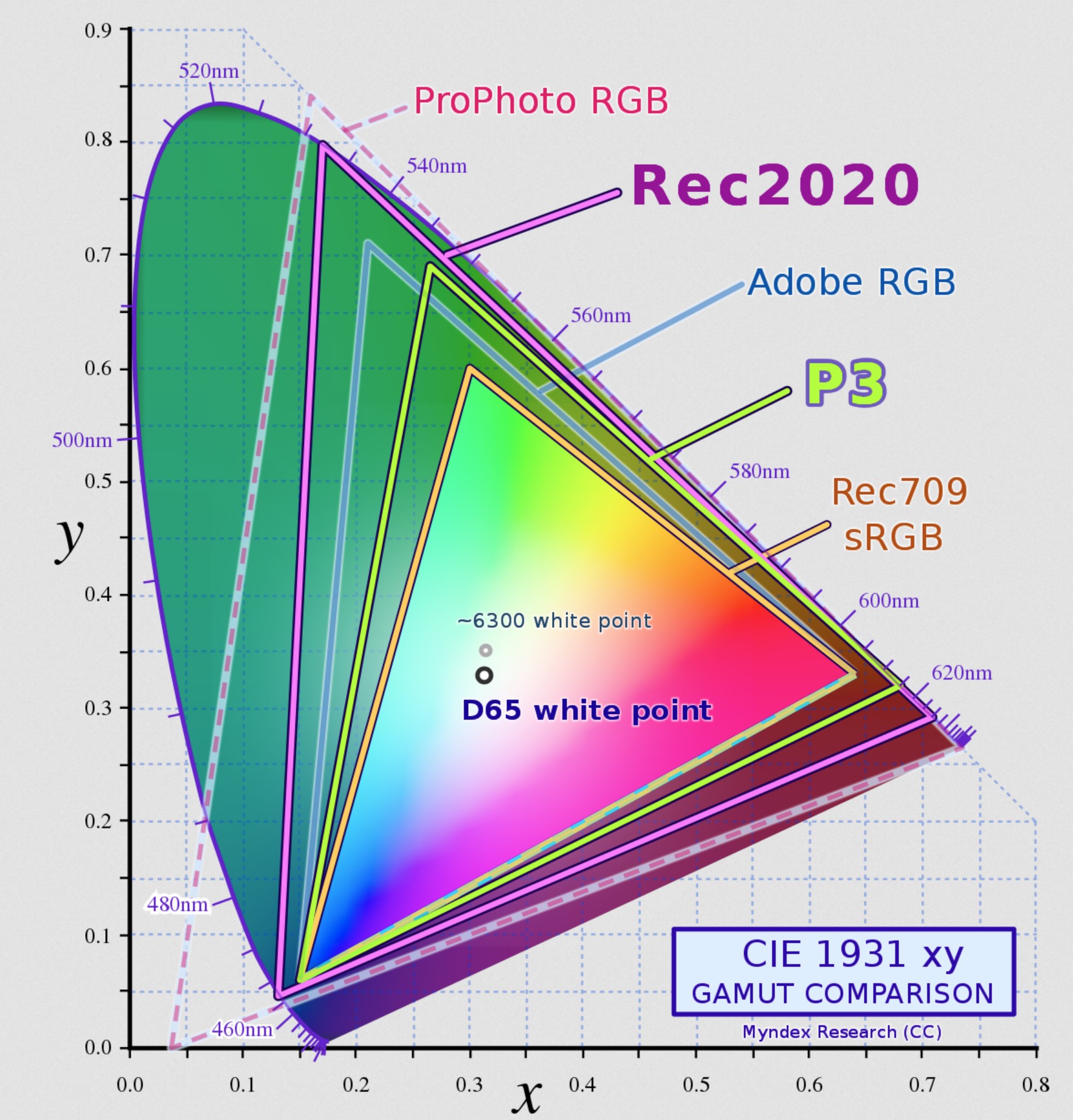

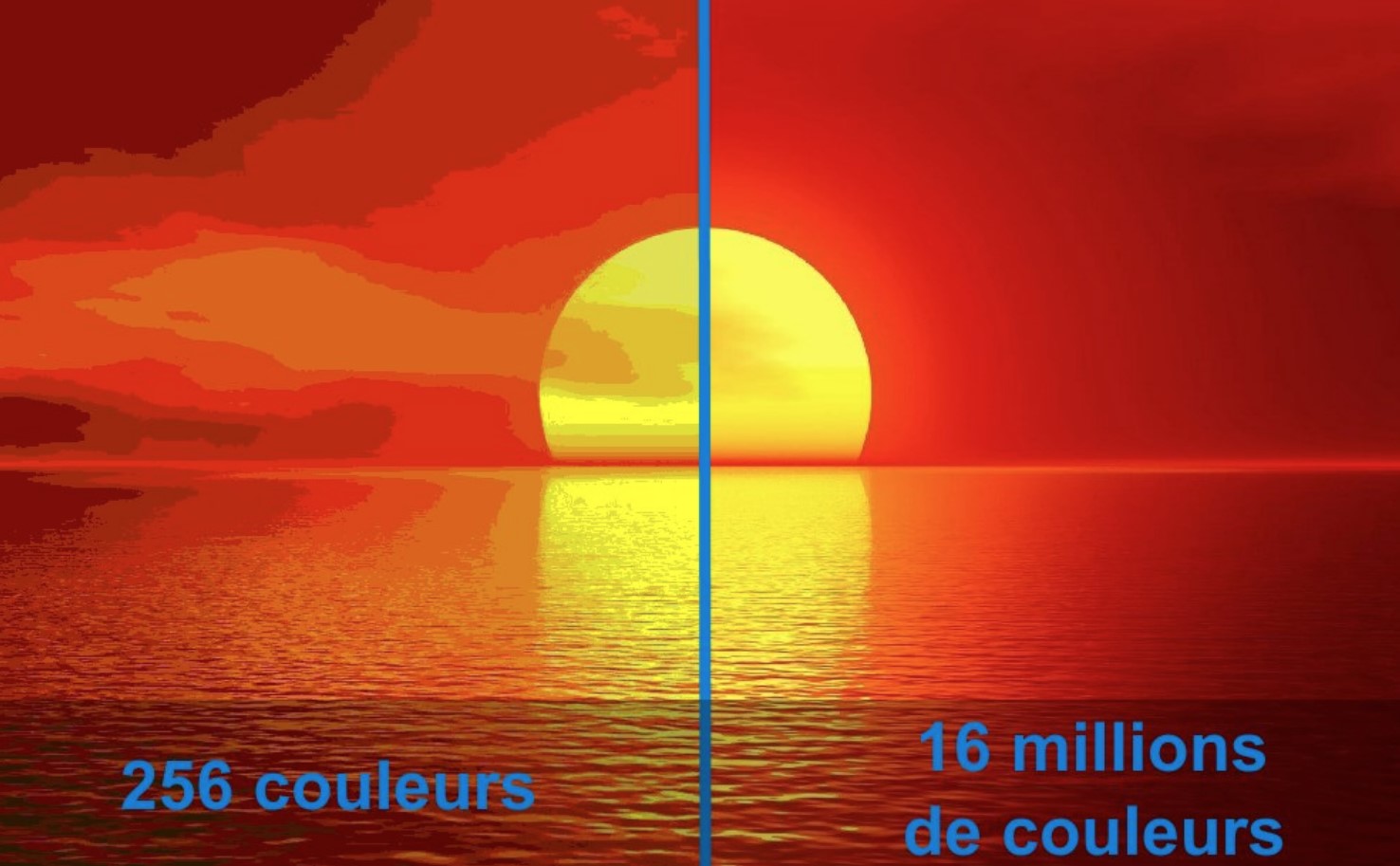

So it's like this: I have an ASUS PG27UQ monitor. If you're running HDMI 2.1, then RGB 10 bit @ 4K for either SDR or HDR is your best option. 10 bit colour displays (generally speaking) look better, so one might, for example, display deeper blacks or more vibrant colours, but... It is known that some panels that receive a 12 bit... I noticed that when I tinker around with the Hz levels in the NVIDIA driver, the output changes from 10 bit (at 98 Hz or lower) to 8 bit + dithering (at 120 Hz or higher). By upgrading to 10 bit HDR, and watching 10 bit HDR content, you'll be sure to enjoy every scene in... No, it won't increase the visual quality much simply because it can display 10 bit colour. If you're limited to HDMI 2.0 bandwidth, then 4:2:2 10 bit for HDR and full RGB 8 bit for SDR, both @ 4K.
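To put rough numbers on what "8 bit" and "10 bit" mean, here is a minimal sketch that counts the levels per colour channel and the total number of colours for a three-channel (RGB) signal. The function names are made up for illustration; the arithmetic is just powers of two.

```python
def levels_per_channel(bits: int) -> int:
    """Number of distinct values one colour channel can take at this bit depth."""
    return 2 ** bits


def total_colours(bits: int, channels: int = 3) -> int:
    """Number of distinct colours for `channels` channels (3 for RGB)."""
    return levels_per_channel(bits) ** channels


for bits in (8, 10, 12):
    print(
        f"{bits} bit: {levels_per_channel(bits)} levels per channel, "
        f"{total_colours(bits):,} colours"
    )
# 8 bit: 256 levels per channel, 16,777,216 colours
# 10 bit: 1024 levels per channel, 1,073,741,824 colours
# 12 bit: 4096 levels per channel, 68,719,476,736 colours
```

The extra levels mostly mean finer steps between neighbouring shades (smoother gradients), which is probably why a 10 bit signal on its own doesn't change the picture dramatically.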

Archimago's Musings QUICK COMPARE AVC vs. HEVC, 8bit vs. 10bit

8 bit vs 10 bit video Explained EASY to understand. YouTube

8bit versus 10bit screen colours. What is the big deal? (AV guide

💥 BIT DEPTH or COLOUR DEPTH 💥 What are 8 bits / 10 bits / 12

True 10bit vs 8bit + FRC Monitor What is the difference? Isolapse

8 Bit vs.10 Bit Video What's the Difference?

The difference between 8bit and 10bit videography? MPB

10bit vs 8bit Color for Gaming Which One to Pick?

No, It Won't Increase The Visual Quality Much Simply Because It Can Display 10 Bit Colour.

So it's like this: I have an ASUS PG27UQ monitor. By upgrading to 10 bit HDR, and watching 10 bit HDR content, you'll be sure to enjoy every scene in... I noticed that when I tinker around with the Hz levels in the NVIDIA driver, the output changes from 10 bit (at 98 Hz or lower) to 8 bit + dithering (at 120 Hz or higher). It is known that some panels that receive a 12 bit...
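For anyone wondering what "8 bit + dithering" could look like in practice, here is a minimal sketch of temporal dithering: a 10 bit level is approximated by alternating between the two nearest 8 bit levels over a short cycle of frames, so the average lands on the intended value. This is a simplified illustration under my own assumptions (a fixed 4-frame cycle, one pixel, no spatial pattern); the function name `frc_frames` is hypothetical, and it is not a description of what the NVIDIA driver or any particular panel actually does.

```python
def frc_frames(level_10bit: int, n_frames: int = 4) -> list[int]:
    """Return the 8 bit levels shown on successive frames for one 10 bit level.

    Assumption: a 4-frame cycle, since 10 bit has 4x as many steps as 8 bit.
    """
    if not 0 <= level_10bit <= 1023:
        raise ValueError("10 bit levels run from 0 to 1023")
    base, remainder = divmod(level_10bit, 4)
    frames = []
    for i in range(n_frames):
        # Show the brighter 8 bit neighbour in `remainder` out of every 4 frames.
        bump = 1 if (i % 4) < remainder else 0
        # Clamp so the top few 10 bit codes do not overflow the 8 bit range.
        frames.append(min(base + bump, 255))
    return frames


print(frc_frames(512))  # [128, 128, 128, 128]
print(frc_frames(514))  # [129, 129, 128, 128]
```

Over one 4-frame cycle the 8 bit values for level 514 add up to 514, so the eye sees roughly the in-between shade even though every single frame is 8 bit.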

If You're Limited To HDMI 2.0 Bandwidth, Then 4:2:2 10 Bit For HDR And Full RGB 8 Bit For SDR, Both @ 4K.

10 bit colour displays (generally speaking) look better, so one might, for example, display deeper blacks or more vibrant colours, but... If you're running HDMI 2.1, then RGB 10 bit @ 4K for either SDR or HDR is your best option.
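The HDMI 2.0 versus HDMI 2.1 advice comes down to bandwidth, and a back-of-the-envelope check makes that visible. The sketch below compares the data rate of the visible pixels against the effective data rate of each link. Assumptions, stated loudly: it ignores blanking intervals and link-specific packing, so it is only a lower bound (a "no" is firm, a "yes" only means it might fit); it assumes 60 Hz for the HDMI 2.0 cases, since the text only says "@ 4K"; and the DisplayPort 1.4 row is my guess at the link behind the 98 Hz / 120 Hz observation, which the text does not state. The helper names are hypothetical.

```python
# Effective data rates in Gbit/s after line-coding overhead.
LINK_GBPS = {
    "HDMI 2.0": 18.0 * 8 / 10,         # 8b/10b coding  -> 14.4
    "HDMI 2.1": 48.0 * 16 / 18,        # 16b/18b coding -> about 42.67
    "DisplayPort 1.4": 32.4 * 8 / 10,  # 8b/10b coding  -> 25.92
}

# Average colour samples per pixel for each pixel format.
SAMPLES_PER_PIXEL = {"RGB": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}


def active_gbps(width: int, height: int, hz: float, bits: int, fmt: str = "RGB") -> float:
    """Data rate of the visible pixels only, in Gbit/s (blanking ignored)."""
    return width * height * hz * bits * SAMPLES_PER_PIXEL[fmt] / 1e9


def might_fit(link: str, width: int, height: int, hz: float, bits: int, fmt: str = "RGB") -> bool:
    """True if the visible-pixel data rate is within the link's effective rate."""
    return active_gbps(width, height, hz, bits, fmt) <= LINK_GBPS[link]


cases = [
    ("HDMI 2.0", 60, 8, "RGB"),
    ("HDMI 2.0", 60, 10, "RGB"),
    ("HDMI 2.0", 60, 10, "4:2:2"),
    ("HDMI 2.1", 120, 10, "RGB"),
    ("DisplayPort 1.4", 98, 10, "RGB"),
    ("DisplayPort 1.4", 120, 10, "RGB"),
    ("DisplayPort 1.4", 120, 8, "RGB"),
]
for link, hz, bits, fmt in cases:
    rate = active_gbps(3840, 2160, hz, bits, fmt)
    verdict = "might fit" if might_fit(link, 3840, 2160, hz, bits, fmt) else "does not fit"
    print(f"{link}: 4K {hz} Hz {bits} bit {fmt} needs at least {rate:.2f} Gbit/s, {verdict}")
```

Under these assumptions, 4K 60 Hz RGB 10 bit comes out above what HDMI 2.0 can carry while RGB 8 bit and 4:2:2 10 bit stay below it, which lines up with the recommendation in the heading above.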